Your Cron Jobs Can't Think. These Can.

How to schedule AI workflows with cron, chain LLM calls with tool nodes, and send a Telegram briefing — automatically, every day.

Every morning, the same ritual: open five tabs, skim headlines, paste into an LLM, wait for a summary, copy it to a file, forward it to Telegram. Manual. Repetitive. Skippable when you're busy — and that's exactly when you need it most.

I automated the entire thing — including the LLM call — in one TOML file. No Python. No Bash glue. No three separate cron entries. Zenii runs the whole pipeline on a schedule, passes outputs between steps, and fires off the Telegram message before I've poured my first coffee.

Here's exactly how it works.

The Workflow: Daily LLM Digest

Four steps. One schedule. The workflow fetches top news, summarises it with an LLM into a 5-bullet briefing, then fans out in parallel — saving the result to a local file and sending it to Telegram at the same time.

id = "daily-news-digest"

name = "Daily LLM Digest"

description = "Fetches top news, produces an LLM-summarized briefing, and sends to Telegram"

schema_version = 1

schedule = "15 11 * * *"

[[steps]]

name = "fetch_news"

type = "tool"

tool = "web_search"

args = { query = "top technology news today" }

[[steps]]

name = "summarize"

type = "llm"

prompt = "You are a news editor. Summarize the following search results into a concise 5-bullet briefing. Use markdown formatting.\n\n{{steps.fetch_news.output}}"

depends_on = ["fetch_news"]

[[steps]]

name = "save_briefing"

type = "tool"

tool = "file_write"

depends_on = ["summarize"]

args = { path = "/tmp/zenii/daily-briefing.md", content = "{{steps.summarize.output}}" }

[[steps]]

name = "notify_telegram"

type = "tool"

tool = "channel_send"

depends_on = ["summarize"]

args = { action = "send", channel = "telegram", message = "📰 *Daily News Digest*\n\n{{steps.summarize.output}}" }

[layout]

fetch_news = { x = 100.0, y = 0.0 }

summarize = { x = 400.0, y = 0.0 }

save_briefing = { x = 700.0, y = 0.0 }

notify_telegram = { x = 700.0, y = 150.0 }

How the Steps Connect

Zenii builds a directed acyclic graph (DAG) from the depends_on declarations. Cycles are rejected at save time. Steps with no dependencies run first; everything else waits for its upstream steps to complete.

In this workflow:

- fetch_news runs immediately because it has no dependencies.
- summarize waits for fetch_news, then injects its output into the LLM prompt via {{steps.fetch_news.output}}.
- save_briefing and notify_telegram both depend on summarize, so they execute in parallel: the file write and the Telegram message happen at the same time.
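To make the ordering concrete, here is a minimal Python sketch of how depends_on declarations can be resolved into execution waves. It is not Zenii's implementation. It uses Python's standard graphlib module, and the names DEPENDS_ON, execution_waves, and has_cycle are made up for illustration.

```python
from graphlib import CycleError, TopologicalSorter

# depends_on declarations copied from the daily digest workflow above.
DEPENDS_ON = {
    "fetch_news": [],
    "summarize": ["fetch_news"],
    "save_briefing": ["summarize"],
    "notify_telegram": ["summarize"],
}


def execution_waves(depends_on):
    """Group steps into waves; every step in a wave has all dependencies met."""
    sorter = TopologicalSorter(depends_on)
    sorter.prepare()  # raises CycleError if the graph contains a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # steps that could run in parallel
        waves.append(ready)
        sorter.done(*ready)  # mark the wave finished so dependents unlock
    return waves


def has_cycle(depends_on):
    """Return True if the dependency graph would be rejected as cyclic."""
    try:
        execution_waves(depends_on)
    except CycleError:
        return True
    return False
```

Calling execution_waves(DEPENDS_ON) puts fetch_news in the first wave, summarize in the second, and save_briefing together with notify_telegram in the third, which mirrors the fan-out described above.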

The [layout] section stores x/y coordinates for each node on the visual canvas. It has no effect on execution order — that's determined entirely by depends_on.

The template syntax {{steps.step_name.output}} works in any field — LLM prompts, tool args, condition expressions. You can also reference {{steps.step_name.success}} and {{steps.step_name.error}} for flow control.
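As a rough mental model of the substitution, the Python sketch below replaces {{steps.NAME.FIELD}} placeholders with values from earlier step results. This is an assumption about the behavior, not Zenii's actual template engine. The PLACEHOLDER pattern, the render function, and the shape of the results dictionary are all hypothetical.

```python
import re

# Matches placeholders such as {{steps.fetch_news.output}}.
PLACEHOLDER = re.compile(r"\{\{\s*steps\.(\w+)\.(output|success|error)\s*\}\}")


def render(template, results):
    """Replace each placeholder with the matching field of an earlier step."""

    def substitute(match):
        step, field = match.group(1), match.group(2)
        if step not in results:
            raise KeyError(f"unknown step: {step}")
        return str(results[step].get(field, ""))

    return PLACEHOLDER.sub(substitute, template)


# Hypothetical results recorded after fetch_news has finished.
results = {"fetch_news": {"output": "headline A", "success": "true", "error": ""}}
prompt = render("Summarize:\n\n{{steps.fetch_news.output}}", results)
```

Referencing a step that has not run raises an error in this sketch. That is one reason a dependent step should list its upstream step in depends_on.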

For full DAG mechanics, retry policies, and failure handling, see the Zenii Workflow Scheduling Documentation.

Create It in Plain English

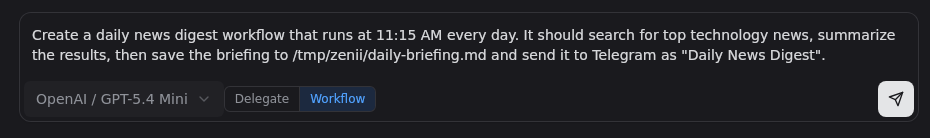

You don't have to write the TOML by hand. Describe the workflow in plain English in Zenii's chat interface — it generates the full TOML, wires the depends_on graph, and sets the schedule for you.

Type what you want. Zenii generates the TOML, builds the graph, and registers the schedule.


The daily digest above was created from a single prompt:

"Create a daily news digest workflow that runs at 11:15 AM every day. It should search for top technology news, summarize the results, then save the briefing to /tmp/zenii/daily-briefing.md and send it to Telegram as 'Daily News Digest'."

The TOML in this post is exactly what Zenii produced. You can edit it afterward — or just describe a change and let the chat update it.

The Schedule Field

schedule = "15 11 * * *" registers the workflow with Zenii's built-in cron scheduler. Standard five-field cron syntax: minute hour day month weekday.

| Expression | Meaning |
|---|---|
| 15 11 * * * | Every day at 11:15 AM |
| 0 9 * * 1-5 | Weekdays at 9 AM |
| */30 * * * * | Every 30 minutes |
| 0 8 1 * * | First of every month at 8 AM |
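If you want to sanity-check an expression before trusting it with a schedule, the following Python sketch tests whether a five-field cron expression fires at a given minute. It is a simplified matcher written for this post, not Zenii's scheduler. It handles only *, numbers, ranges, lists, and steps, and it requires the day-of-month and weekday fields to match together, whereas classic cron treats them as alternatives when both are restricted. The function names are hypothetical.

```python
from datetime import datetime


def field_matches(field, value, low_bound, high_bound):
    """Return True if one cron field accepts the given value.

    Supports '*', single numbers, ranges (a-b), lists (a,b) and steps (*/n).
    """
    for part in field.split(","):
        base, _, step_text = part.partition("/")
        step = int(step_text) if step_text else 1
        if base == "*":
            low, high = low_bound, high_bound
        elif "-" in base:
            low, high = (int(piece) for piece in base.split("-"))
        else:
            low = high = int(base)
        if low <= value <= high and (value - low) % step == 0:
            return True
    return False


def cron_matches(expression, moment):
    """Return True if a five-field cron expression fires at the given minute."""
    minute, hour, day, month, weekday = expression.split()
    # Cron numbers weekdays from Sunday = 0; datetime.weekday() starts at Monday = 0.
    cron_weekday = (moment.weekday() + 1) % 7
    return (
        field_matches(minute, moment.minute, 0, 59)
        and field_matches(hour, moment.hour, 0, 23)
        and field_matches(day, moment.day, 1, 31)
        and field_matches(month, moment.month, 1, 12)
        and field_matches(weekday, cron_weekday, 0, 6)
    )
```

For example, cron_matches("0 9 * * 1-5", datetime(2024, 1, 6, 9, 0)) is False because 6 January 2024 is a Saturday, while the same call for Monday 1 January 2024 is True.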

Zenii also supports interval syntax for simpler cases:

schedule = "every 300s" # run every 5 minutes

And one-shot jobs that auto-delete after a successful run:

schedule = "0 10 * * *"

one_shot = true

The scheduler persists across daemon restarts via SQLite. Missed runs are tracked, and failures retry with exponential backoff: 30s → 60s → 5m → 15m → 1h.
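The retry ladder is easier to reason about as a lookup table. The Python sketch below encodes the delays quoted above. What happens after the fifth retry is not stated in this post, so holding at one hour is my assumption, and the names BACKOFF_SECONDS and retry_delay are hypothetical.

```python
# Delays in seconds, taken from the sequence quoted above: 30s, 60s, 5m, 15m, 1h.
BACKOFF_SECONDS = [30, 60, 300, 900, 3600]


def retry_delay(attempt):
    """Return the wait in seconds before retry number `attempt` (1-based)."""
    if attempt < 1:
        raise ValueError("attempt numbers start at 1")
    # Assumption: retries past the end of the ladder keep the final 1h delay.
    index = min(attempt, len(BACKOFF_SECONDS)) - 1
    return BACKOFF_SECONDS[index]
```

Under these assumptions a job that keeps failing has waited 30 + 60 + 300 + 900 + 3600 = 4890 seconds in total, roughly 81 minutes, by the time its fifth retry starts.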

The Node Palette

A step with type = "tool" can call any node Zenii ships by naming it in the tool field. A step with type = "llm" is a first-class step type of its own: it takes a prompt field and calls your configured AI provider directly.

Here's the full palette:

| Category | Node | What it does |
|---|---|---|
| AI | llm_prompt | Run a prompt against your configured AI provider |
| Search | web_search | Search the web and return ranked results |
| Search | wiki_search | Query your Zenii wiki knowledge base |
| System | system_info | Read CPU, memory, and OS details |
| System | shell | Execute a shell command and capture output |
| System | process | Start, stop, or inspect OS processes |
| Files | file_read | Read a file's contents |
| Files | file_write | Write content to a file |
| Files | file_search | Search files by name or pattern |
| Files | file_list | List directory contents |
| Files | patch | Apply a unified diff patch to a file |
| Memory | memory_store | Write a key-value fact to long-term memory |
| Memory | memory_recall | Retrieve from memory by semantic query |
| Memory | memory_forget | Delete a memory entry by key |
| Channels | channel_send | Send a message to Telegram, Slack, or Discord |
| Config | config_read | Read a value from Zenii's config |
| Config | config_update | Update a config value at runtime |
| Flow Control | delay | Pause for N seconds (useful for rate limiting) |
| Flow Control | condition | Branch on a boolean expression |

The wiki_search node is worth calling out: it queries your local Zenii wiki (your own indexed documents, notes, and saved knowledge) and returns relevant passages. Combined with an llm step, your scheduled workflow can synthesize current web results against your private knowledge base. See "Stop Rereading Your Documents. Let the AI Study Them Once." for how to build and populate your wiki.

More Workflow Ideas

A few quick sketches using different parts of the palette.

Weekly code health check — run tests on a schedule, only alert on failure:

id = "weekly-test-check"

schedule = "0 9 * * 1"

[[steps]]

name = "run_tests"

type = "tool"

tool = "shell"

args = { command = "cargo test 2>&1" }

[[steps]]

name = "check_result"

type = "tool"

tool = "condition"

depends_on = ["run_tests"]

args = { expression = "{{steps.run_tests.success}}", if_false = "alert" }

[[steps]]

name = "alert"

type = "tool"

tool = "channel_send"

depends_on = ["check_result"]

args = { channel = "telegram", message = "Tests failed:\n\n{{steps.run_tests.output}}" }

Memory-augmented research digest — search, summarize, and remember for next time:

id = "research-memory"

schedule = "0 18 * * *"

[[steps]]

name = "search"

type = "tool"

tool = "web_search"

args = { query = "Rust async runtime updates this week" }

[[steps]]

name = "summarize"

type = "llm"

prompt = "Summarize in 3 sentences:\n\n{{steps.search.output}}"

depends_on = ["search"]

[[steps]]

name = "remember"

type = "tool"

tool = "memory_store"

depends_on = ["summarize"]

args = { key = "rust-weekly-{{date}}", value = "{{steps.summarize.output}}" }

Config-driven topic digest — change the search topic from config without touching the workflow:

id = "topic-digest"

schedule = "30 8 * * *"

[[steps]]

name = "get_topic"

type = "tool"

tool = "config_read"

args = { key = "digest.topic" }

[[steps]]

name = "search"

type = "tool"

tool = "web_search"

depends_on = ["get_topic"]

args = { query = "{{steps.get_topic.output}} news today" }

[[steps]]

name = "summarize"

type = "llm"

prompt = "Summarize the key points:\n\n{{steps.search.output}}"

depends_on = ["search"]

[[steps]]

name = "send"

type = "tool"

tool = "channel_send"

depends_on = ["summarize"]

args = { channel = "telegram", message = "{{steps.summarize.output}}" }

Update the topic with zenii config set digest.topic "machine learning" and the next run picks it up.

Run It

Save the workflow file and register it:

zenii workflow create digest.toml

Test it immediately without waiting for the cron:

zenii workflow run daily-news-digest

Check the run history with per-step timing and output:

zenii workflow history daily-news-digest

List all scheduled workflows and their next fire times:

zenii schedule list

You can also trigger any workflow over HTTP from a CI pipeline, webhook, or MCP agent:

curl -X POST http://localhost:18981/workflows/daily-news-digest/run \

-H "Authorization: Bearer $TOKEN"
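If the caller is a script rather than a shell, the same request can be built with Python's standard library. This sketch mirrors the curl command above and assumes nothing beyond it: the URL, the port, and the Bearer header come from that command, while the function names are hypothetical and the response format is not covered in this post, so only the HTTP status is returned.

```python
import urllib.request


def build_run_request(workflow_id, token, base_url="http://localhost:18981"):
    """Build the same POST request as the curl command, without sending it."""
    return urllib.request.Request(
        f"{base_url}/workflows/{workflow_id}/run",
        method="POST",
        headers={"Authorization": f"Bearer {token}"},
    )


def trigger_workflow(workflow_id, token):
    """Send the request and return the HTTP status code."""
    request = build_run_request(workflow_id, token)
    with urllib.request.urlopen(request, timeout=30) as response:
        return response.status
```

Keeping the request construction separate from the network call makes it possible to inspect or log the request in CI before anything is sent.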

One TOML file, one cron expression, one Zenii process. Your LLM pipeline runs while you sleep.

Full scheduling docs: https://docs.zenii.sprklai.com/scheduling

GitHub: https://github.com/sprklai/zenii — MIT licensed, open source.

If you build something with it, drop a link in the comments.